Welcome to Polygon Aggkit Tech Docs

Welcome to the official documentation for the Polygon Aggkit. This guide will help you get started with building and deploying rollups to the Agglayer.

Set up the environment for local debugging in VS Code

Requirements

- A working, running kurtosis-cdk environment.

- In test/scripts/env.sh, set KURTOSIS_FOLDER to point to your setup.

Tip

Use your WIP branch of Kurtosis CDK as needed.

1. Create a configuration for this Kurtosis environment

scripts/local_config

2. Stop the aggkit node started by Kurtosis CDK

kurtosis service stop aggkit cdk-node-001

3. Add an entry to the VS Code launch.json

When it runs, scripts/local_config prints a suggested entry for the launch.json configurations.

AggOracle Component

Overview

The AggOracle component ensures the Global Exit Root (GER) is propagated from L1 to the L2 sovereign chain smart contract. This is critical for enabling asset and message bridging between chains.

The GER is indexed from the L2 smart contract by the L2GERSyncer component and persisted in local storage.

The AggOracle supports two operational modes:

- Direct Injection Mode: Direct injection of GERs into the L2 GER Manager contract

- AggOracle Committee Mode: Consensus-based GER injection through a committee of oracle members

Key Components:

- ChainSender: Interface for submitting GERs to the smart contract.

- EVMChainGERSender: An implementation of the ChainSender interface supporting both operational modes.

Workflow

What is Global Exit Root (GER)?

The Global Exit Root consolidates:

- Mainnet Exit Root (MER): Updated during bridge transactions from L1.

- Rollup Exit Root (RER): Updated when verified rollup batches are submitted via ZKP.

GER = hash(MER, RER)

Operational Modes

1. Direct Injection Mode

In this mode, the AggOracle directly injects GERs into the L2 GER Manager contract:

- Fetch Finalized GER: AggOracle retrieves the latest GER finalized on L1.

- Check GER Injection: Confirms whether the GER is already stored in the smart contract.

- Direct Injection: If missing, AggOracle directly submits the GER via the insertGlobalExitRoot function.

- Sync Locally: L2GERSyncer fetches and stores the GER locally for downstream use.

2. AggOracle Committee Mode

In this mode, GER injection requires consensus from a committee of oracle members:

- Fetch Finalized GER: AggOracle retrieves the latest GER finalized on L1.

- Check GER Status: Confirms whether the GER is already injected or proposed.

- Propose GER: If the GER is not yet proposed, the committee member submits it via the proposeGlobalExitRoot function to the AggOracleCommittee contract.

- Committee Proposals: Other committee members submit the same GER via proposeGlobalExitRoot to signal agreement.

- Automatic Injection: Once quorum is reached, the GER is automatically injected into the L2 GER Manager contract.

- Sync Locally: L2GERSyncer fetches and stores the GER locally for downstream use.

Committee Consensus Mechanism

- Committee Members: A predefined set of authorized oracle addresses

- Quorum: Minimum number of votes required for GER injection

- Proposal Tracking: Each member’s latest proposed GER is tracked; members cannot re-propose the same GER

- Proposal Counting: The committee contract tracks proposals for each GER

- Automatic Execution: GER injection happens automatically when quorum is reached
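To make these consensus rules concrete, here is a minimal in-memory model. It is a hypothetical sketch, not AggOracleCommittee.sol. The committee type, its methods, and the error values are illustrative. It assumes a GER's vote count is the number of members whose latest proposal is that GER, so a member who proposes a different GER effectively moves their vote.

```go
package main

import (
	"errors"
	"fmt"
)

type GER [32]byte

var (
	ErrNotMember       = errors.New("sender is not a committee member")
	ErrAlreadyVoted    = errors.New("member already proposed this GER")
	ErrAlreadyInjected = errors.New("GER already injected")
)

// committee is a hypothetical model of the on-chain consensus rules.
type committee struct {
	members      map[string]bool // authorized oracle addresses
	quorum       int             // minimum votes required for injection
	lastProposed map[string]GER  // latest GER proposed by each member
	injected     map[GER]bool    // stands in for the L2 GER Manager storage
}

func newCommittee(members []string, quorum int) *committee {
	c := &committee{
		members:      make(map[string]bool),
		quorum:       quorum,
		lastProposed: make(map[string]GER),
		injected:     make(map[GER]bool),
	}
	for _, m := range members {
		c.members[m] = true
	}
	return c
}

// votes counts the members whose latest proposal is ger.
func (c *committee) votes(ger GER) int {
	n := 0
	for _, g := range c.lastProposed {
		if g == ger {
			n++
		}
	}
	return n
}

// propose records a member's vote and injects the GER once quorum is reached.
// It reports whether this call triggered the injection.
func (c *committee) propose(member string, ger GER) (bool, error) {
	if !c.members[member] {
		return false, ErrNotMember
	}
	if c.injected[ger] {
		return false, ErrAlreadyInjected
	}
	if last, ok := c.lastProposed[member]; ok && last == ger {
		return false, ErrAlreadyVoted
	}
	c.lastProposed[member] = ger
	if c.votes(ger) >= c.quorum {
		c.injected[ger] = true
		return true, nil
	}
	return false, nil
}

func main() {
	c := newCommittee([]string{"0xA", "0xB", "0xC"}, 2)
	ger := GER{0x42}
	fmt.Println(c.propose("0xA", ger)) // first vote: below quorum
	fmt.Println(c.propose("0xA", ger)) // same member, same GER: rejected
	fmt.Println(c.propose("0xB", ger)) // second vote: quorum reached, injected
}
```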

The sequence diagrams below depict the interactions in both operational modes.

Direct Injection Mode:

sequenceDiagram
    participant AggOracle
    participant ChainSender
    participant L1InfoTreeSyncer
    participant L2GERManager
    AggOracle->>AggOracle: start (Direct Injection Mode)
    AggOracle->>AggOracle: process latest GER
    loop trigger on preconfigured frequency
        AggOracle->>L1InfoTreeSyncer: get latest finalized GER
        L1InfoTreeSyncer-->>AggOracle: return GER from L1 info tree
        AggOracle->>ChainSender: ProcessGER
        ChainSender->>L2GERManager: check if GER injected
        L2GERManager-->>ChainSender: GER injection status
        alt GER already injected
            ChainSender->>ChainSender: log GER already injected
        else GER not injected
            ChainSender->>L2GERManager: insertGlobalExitRoot(GER)
            L2GERManager-->>ChainSender: transaction result
        end
    end
    AggOracle->>AggOracle: handle GER processing error

AggOracle Committee Mode:

sequenceDiagram
    participant AggOracle
    participant ChainSender
    participant L1InfoTreeSyncer
    participant AggOracleCommittee
    participant L2GERManager
    participant OtherCommitteeMembers
    AggOracle->>AggOracle: start (Committee Mode)
    AggOracle->>AggOracle: process latest GER
    loop trigger on preconfigured frequency
        AggOracle->>L1InfoTreeSyncer: get latest finalized GER
        L1InfoTreeSyncer-->>AggOracle: return GER from L1 info tree
        AggOracle->>ChainSender: ProcessGER
        ChainSender->>L2GERManager: check if GER injected
        L2GERManager-->>ChainSender: GER injection status
        alt GER already injected
            ChainSender->>ChainSender: log GER already injected
        else GER not injected
            ChainSender->>AggOracleCommittee: check if GER proposed
            AggOracleCommittee-->>ChainSender: proposal status
            alt GER already proposed
                ChainSender->>ChainSender: log GER already proposed
            else GER not yet proposed
                ChainSender->>AggOracleCommittee: proposeGlobalExitRoot(GER)
                AggOracleCommittee->>AggOracleCommittee: record proposal
                OtherCommitteeMembers->>AggOracleCommittee: other members proposeGlobalExitRoot(GER)
                AggOracleCommittee->>AggOracleCommittee: record additional proposals
                alt quorum reached
                    AggOracleCommittee->>L2GERManager: insertGlobalExitRoot(GER)
                    L2GERManager-->>AggOracleCommittee: injection result
                else quorum not reached
                    AggOracleCommittee->>AggOracleCommittee: wait for more votes
                end
            end
        end
    end
    AggOracle->>AggOracle: handle GER processing error

Key Components

1. AggOracle

The AggOracle fetches the finalized GER and ensures its injection into the L2 smart contract using the configured operational mode.

Functions:

- Start: Periodically processes GER updates using a ticker.

- processLatestGER: Fetches the latest GER and delegates processing to the ChainSender.

2. ChainSender Interface

Defines the unified interface for submitting GERs in both operational modes.

// Requires imports: "context" and "github.com/ethereum/go-ethereum/common"
type ChainSender interface {
	// Common methods for both modes
	IsGERInjected(ger common.Hash) (bool, error)
	ProcessGER(ctx context.Context, ger common.Hash) error

	// Direct injection mode
	InjectGER(ctx context.Context, ger common.Hash) error

	// Committee mode specific
	ProposeGER(ctx context.Context, ger common.Hash) error
	IsGERProposed(ger common.Hash) (bool, error)
}

3. EVMChainGERSender

Implements ChainSender using Ethereum clients and transaction management, supporting both operational modes.

Mode Selection:

- Direct Injection Mode: When EnableAggOracleCommittee = false

- Committee Mode: When EnableAggOracleCommittee = true and AggOracleCommitteeAddr is configured

Functions:

Common Functions:

- IsGERInjected: Verifies GER presence in the L2 GER Manager contract.

- ProcessGER: Routes to either InjectGER or ProposeGER based on the operational mode.

Direct Injection Mode:

- InjectGER: Directly submits the GER using insertGlobalExitRoot and monitors the transaction status.

Committee Mode:

- ProposeGER: Proposes the GER to the AggOracleCommittee using proposeGlobalExitRoot.

- IsGERProposed: Checks if the current committee member has already proposed the GER.

Validation:

- Direct Mode: Validates that the sender address is authorized as GlobalExitRootUpdater.

- Committee Mode: Validates that the sender is a registered committee member.

Smart Contract Integration

1. L2 GER Manager Contract

Used in both operational modes for final GER storage and status checking.

- Contract: GlobalExitRootManagerL2SovereignChain.sol

- Key Functions:

  - insertGlobalExitRoot: Final GER injection (called directly in Direct Mode, or by the committee contract in Committee Mode)

  - GlobalExitRootMap: Checks if a GER is already injected

  - GlobalExitRootUpdater: Gets the authorized updater address (for Direct Mode validation)

- Source Code: zkevm-contracts

- Bindings: Available in cdk-contracts-tooling

2. AggOracleCommittee Contract

Used exclusively in Committee Mode for consensus-based GER proposals.

⚠️ Implementation Note: The client-side code only handles proposal submission via proposeGlobalExitRoot. The consensus mechanism, quorum handling, and automatic GER injection are handled at the smart contract level. Refer to the actual AggOracleCommittee.sol contract for complete implementation details.

- Contract: AggOracleCommittee.sol

- Key Functions (as defined in the current interface):

  - proposeGlobalExitRoot: Submits a GER proposal (called via transaction)

  - GetAggOracleMemberIndex: Validates committee membership

  - AddressToLastProposedGER: Tracks the last proposal by each member

- Additional Functions (used in the implementation but not in the interface):

  - AggOracleMembers: Gets committee member information (used in validation)

  - ProposedGERToReport: Gets the proposal status for a specific GER

- Initialization Parameters:

  - Committee Members: Array of authorized oracle addresses

  - Quorum: Minimum proposals/votes required for consensus (contract-level implementation)

- Bindings: Available in cdk-contracts-tooling

Configuration

Direct Injection Mode Configuration

AggOracle:
  TargetChainType: "EVM"
  URLRPCL1: "https://eth-mainnet.g.alchemy.com/v2/your-api-key"
  WaitPeriodNextGER: "5s"
  EnableAggOracleCommittee: false
  EVMSender:
    GlobalExitRootL2: "0x123...abc" # L2 GER Manager contract address
    AggOracleCommitteeAddr: "0x000...000" # Not used in direct mode
    GasOffset: 80000
    WaitPeriodMonitorTx: "1s"
    EthTxManager:
      FrequencyToMonitorTxs: "1s"
      WaitTxToBeMined: "2m"
      # ... other EthTxManager config

Committee Mode Configuration

AggOracle:
  TargetChainType: "EVM"
  URLRPCL1: "https://eth-mainnet.g.alchemy.com/v2/your-api-key"
  WaitPeriodNextGER: "5s"
  EnableAggOracleCommittee: true
  EVMSender:
    GlobalExitRootL2: "0x123...abc" # L2 GER Manager contract address
    AggOracleCommitteeAddr: "0x456...def" # AggOracleCommittee contract address
    GasOffset: 80000
    WaitPeriodMonitorTx: "1s"
    EthTxManager:
      FrequencyToMonitorTxs: "1s"
      WaitTxToBeMined: "2m"
      # Ensure the From address is a committee member
      # ... other EthTxManager config

Key Configuration Differences

| Configuration | Direct Injection Mode | Committee Mode |
|---|---|---|
| EnableAggOracleCommittee | false | true |
| AggOracleCommitteeAddr | Not required | Required (valid contract address) |
| EthTxManager.From | Must be authorized as GlobalExitRootUpdater | Must be a registered committee member |
| GER Submission | Direct via insertGlobalExitRoot | Proposal via proposeGlobalExitRoot |
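The configuration requirements in this table can be expressed as a startup check. This is a hypothetical sketch. The struct mirrors only a few of the keys shown in the YAML examples above. The rule that an empty or all-zero AggOracleCommitteeAddr means "not configured" is an assumption, not documented aggkit behavior.

```go
package main

import (
	"errors"
	"fmt"
	"strings"
)

// evmSenderConfig mirrors a subset of the AggOracle keys shown above (hypothetical struct).
type evmSenderConfig struct {
	EnableAggOracleCommittee bool
	GlobalExitRootL2         string
	AggOracleCommitteeAddr   string
}

// isZeroAddr treats an empty string or an all-zero hex address as "not configured".
func isZeroAddr(addr string) bool {
	s := strings.TrimPrefix(strings.ToLower(addr), "0x")
	return strings.Trim(s, "0") == ""
}

// validate enforces the mode-dependent requirements from the table.
func validate(cfg evmSenderConfig) error {
	if isZeroAddr(cfg.GlobalExitRootL2) {
		return errors.New("GlobalExitRootL2 is required in both modes")
	}
	if cfg.EnableAggOracleCommittee && isZeroAddr(cfg.AggOracleCommitteeAddr) {
		return errors.New("committee mode requires a valid AggOracleCommitteeAddr")
	}
	return nil
}

func main() {
	direct := evmSenderConfig{GlobalExitRootL2: "0x00000000000000000000000000000000000000aa"}
	fmt.Println("direct:", validate(direct))
	committee := direct
	committee.EnableAggOracleCommittee = true
	fmt.Println("committee without address:", validate(committee))
}
```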

📊 Aggoracle Metrics

The Aggoracle service exposes Prometheus metrics to track Global Exit Root (GER) processing activity, latency, and error rates.

All metrics are registered under the namespace: aggoracle

| Metric | Type | Description | Unit |
|---|---|---|---|
| aggoracle_ger_processing_trigger_total | Counter | Total number of GER processing triggers. | count |
| aggoracle_ger_processing_errors_total | Counter | Total number of GER processing errors. | count |
| aggoracle_ger_processing_duration_seconds | Histogram | Time taken to process a single Global Exit Root from start to finish. | seconds |

Summary

The AggOracle component automates the propagation of GERs from L1 to L2, enabling bridging across networks. It supports two operational modes:

- Direct Injection Mode: Simple, single-authority GER injection

- Committee Mode: Consensus-based GER injection providing enhanced security through multiple oracle validation

Refer to the EVM implementation in evm.go for guidance on building chain senders for non-EVM chains.

AggSender Component

AggSender is responsible for building and packing the information required to prove a target chain’s bridge state into a certificate. This certificate provides the inputs needed to build a proof that is eventually settled on L1 via the Agglayer.

The AggSender consists of a multisig committee, where one participant acts as the proposer, and the remaining members act as validators.

The proposer is responsible for building and signing the certificate, and propagating it to the validators for verification via gRPC. Each validator independently validates the proposed certificate and returns a signature to the proposer if the validation is successful.

The proposer packs each signature (including its own) into the certificate and sends it to the Agglayer for settlement.

The multisig committee is registered on the rollup contract on L1. It contains a list of signers, each represented by an Ethereum address and a URL. When initializing the rollup contract, it is important that:

- the first signer in the list corresponds to the AggSender proposer. For the proposer, the url parameter may be omitted (it is not used for validation requests).

- the remaining signers represent AggSender validators, and their url fields must be properly set, as these endpoints are used to send certificate validation requests via gRPC.
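These two ordering rules can be checked before the signer list is registered. The sketch below is minimal and uses hypothetical types (signer, validateSignerList). It checks only the two rules stated above and does not reproduce any checks the rollup contract itself performs. Addresses are compared case-insensitively, which is an assumption that suits hex addresses.

```go
package main

import (
	"fmt"
	"strings"
)

// signer is a hypothetical view of one multisig committee entry.
type signer struct {
	Address string
	URL     string
}

// validateSignerList checks that the first signer is the proposer and that
// every validator (index >= 1) has a URL for gRPC validation requests.
func validateSignerList(signers []signer, proposer string) error {
	if len(signers) == 0 {
		return fmt.Errorf("empty signer list")
	}
	if !strings.EqualFold(signers[0].Address, proposer) {
		return fmt.Errorf("first signer %s is not the proposer %s", signers[0].Address, proposer)
	}
	for i, s := range signers[1:] {
		if strings.TrimSpace(s.URL) == "" {
			return fmt.Errorf("validator %d (%s) has no URL", i+1, s.Address)
		}
	}
	return nil
}

func main() {
	signers := []signer{
		{Address: "0xProposer"}, // URL may be omitted for the proposer
		{Address: "0xValidator1", URL: "validator1:50051"},
		{Address: "0xValidator2", URL: "validator2:50051"},
	}
	fmt.Println(validateSignerList(signers, "0xproposer"))
}
```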

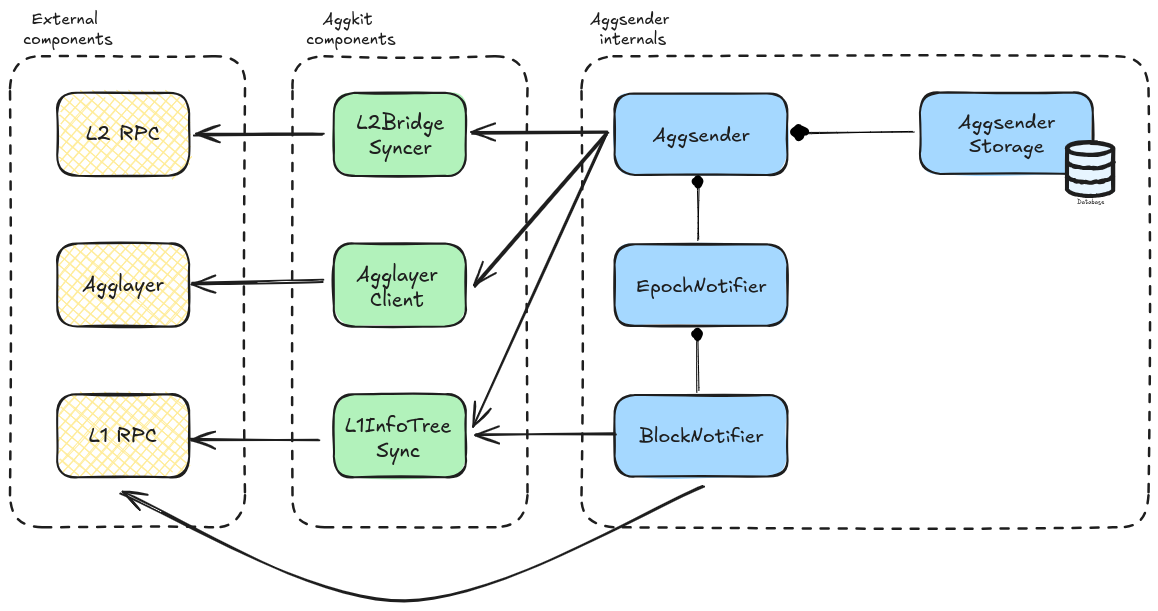

Component Diagram

The image below depicts the Aggsender components (the editable link of the diagram is found here).

Flow

Starting the AggSender

The Aggsender gets the epoch configuration from the Agglayer. It then checks the last certificate in its DB (if one exists) against the Agglayer, to make sure that both are on the same page:

- If the DB is empty, it takes the last certificate the Agglayer has as its starting point.

- If it is a fresh start and there are no previous certificates, it sets its starting block to 1 and starts polling bridges and claims from the syncer from that block.

- If the Aggsender is not on the same page as the Agglayer, it logs an error and does not proceed with building new certificates. This case means that another party sent a certificate in place of the Aggsender, which is invalid since the Aggsender is a single instance per L2 network. It can also happen if the Aggsender is given a DB from a different network.

- If both the Aggsender and the Agglayer have the same certificate, the Aggsender starts the certificate monitoring and build process, since this is a valid use case.
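The startup decision can be sketched as a small function. The certInfo type and the startupAction values are hypothetical simplifications. The sketch assumes certificates are compared by height and certificate ID only, while the actual check may compare more fields. It also assumes that a local certificate the Agglayer does not know about counts as a mismatch.

```go
package main

import "fmt"

// certInfo is a hypothetical summary of a certificate for comparison purposes.
type certInfo struct {
	Height        uint64
	CertificateID string
}

type startupAction int

const (
	freshStart    startupAction = iota // no certificates anywhere: start from block 1
	adoptAgglayer                      // empty DB: use the Agglayer's last certificate
	resume                             // same certificate on both sides
	haltMismatch                       // diverged: log an error and do not build certificates
)

// decideStartup compares the last local certificate with the Agglayer's (nil = none).
func decideStartup(local, agglayer *certInfo) startupAction {
	switch {
	case local == nil && agglayer == nil:
		return freshStart
	case local == nil:
		return adoptAgglayer
	case agglayer == nil || *local != *agglayer:
		return haltMismatch
	default:
		return resume
	}
}

func main() {
	c := &certInfo{Height: 7, CertificateID: "0xabc"}
	fmt.Println(decideStartup(nil, nil), decideStartup(nil, c), decideStartup(c, c))
}
```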

sequenceDiagram
    participant Agglayer
    participant Aggsender Proposer
    participant Aggsender Validator 1
    participant Aggsender Validator N
    Aggsender Proposer->>Agglayer: Read epoch configuration
    Aggsender Proposer->>Agglayer: Read latest known certificate
    Aggsender Proposer-->>Aggsender Proposer: Wait for an epoch
    Aggsender Proposer-->>Aggsender Proposer: Build certificate
    Aggsender Proposer->>Aggsender Validator 1: Validate certificate
    Aggsender Proposer->>Aggsender Validator N: Validate certificate
    Aggsender Validator 1-->>Aggsender Proposer: Return signature if valid
    Aggsender Validator N-->>Aggsender Proposer: Return signature if valid
    Aggsender Proposer->>Agglayer: Send certificate

PessimisticProof Mode

The Aggsender waits until the epoch event is triggered and asks the L2BridgeSyncer whether there are new bridges and claims to send to the Agglayer. When the point in the epoch at which a certificate must be sent is reached, the Aggsender polls all the bridges and claims from the bridge syncer, from the last L2 block already sent to the Agglayer up to the latest block the syncer has.

Note that no certificate is sent to the Agglayer if the syncer has no bridges, since bridges are what change the Local Exit Root (LER).

If there are bridges, a certificate is built, signed, and sent to the Agglayer using the provided Agglayer RPC URL.

Currently, the Agglayer only supports one certificate per L1 epoch, per network, so we cannot send more than one certificate per epoch. After the certificate is sent, we wait until the next epoch, either to resend it if its status is InError, or to build a new one if its status is Settled. There is also no built-in limit on how many bridges and claims can be sent in a single certificate. Because certificates carry a lot of data through RPC, a large certificate could hit the RPC layer limit. For this reason, the Aggsender has the MaxCertSize configuration parameter. It lets the user define the maximum certificate size in bytes (based on the RPC communication layer limit), and the Aggsender limits the number of bridges and claims it sends to the Agglayer accordingly. Since bridges and claims carry fixed-size data (each field is a fixed-size field), the size of a certificate can be calculated with great precision.
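Because every bridge and claim has a fixed encoded size, the cut-off can be computed arithmetically. The sketch below is minimal and relies on several assumptions. The sizes baseCertSize, bridgeExitSize, and claimSize are hypothetical placeholders, not the real encoding. Blocks are taken in order and never split, and the certificate stops before the first block that would exceed MaxCertSize. As in the configuration table, 0 disables the limit.

```go
package main

import "fmt"

// Placeholder sizes in bytes: NOT the real encoding, chosen only for illustration.
const (
	baseCertSize   = 1_000 // hypothetical fixed overhead (header, roots, signature)
	bridgeExitSize = 300   // hypothetical size of one bridge exit
	claimSize      = 2_000 // hypothetical size of one imported bridge exit (claim with proofs)
)

// blockEvents groups the bridges and claims found in one L2 block.
type blockEvents struct {
	Block   uint64
	Bridges int
	Claims  int
}

// fitBlocks returns how many leading blocks fit within maxCertSize without
// splitting a block. maxCertSize == 0 means no limit.
func fitBlocks(blocks []blockEvents, maxCertSize uint) int {
	if maxCertSize == 0 {
		return len(blocks)
	}
	size := uint(baseCertSize)
	for i, b := range blocks {
		size += uint(b.Bridges)*bridgeExitSize + uint(b.Claims)*claimSize
		if size > maxCertSize {
			return i
		}
	}
	return len(blocks)
}

func main() {
	blocks := []blockEvents{
		{Block: 101, Bridges: 2},            // 600 bytes
		{Block: 102, Bridges: 1, Claims: 1}, // 2,300 bytes
		{Block: 103, Claims: 3},             // 6,000 bytes
	}
	n := fitBlocks(blocks, 5_000)
	fmt.Printf("include %d block(s), up to L2 block %d\n", n, blocks[n-1].Block)
}
```

With a 5,000-byte limit, the first two blocks total 3,900 bytes including the overhead, and adding block 103 would exceed the limit. Block 103 would be left for a later certificate.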

An InError status on a certificate can mean a number of things: an error on the Agglayer, an error in the data the Aggsender sent, or a certificate sent between two epochs, which the Agglayer considers invalid. Either way, the certificate needs to be resent in the next epoch (or immediately after the status change is noticed, depending on the RetryCertAfterInError config parameter). The resent certificate carries all the previously sent bridges and claims, plus the new ones that the syncer saw and saved after them.

When resending a certificate, its height must be reused. When sending a new certificate, its height must be the previously sent certificate's height incremented by one.

If the previously sent certificate is neither InError nor Settled on the Agglayer, no certificate can be sent or resent, even when a new epoch event is handled, because the Agglayer has not finished processing the previous one.
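The rules about status, height, and resending can be condensed into one decision function. This is a hypothetical sketch for PessimisticProof mode. InError and Settled match the statuses used above. statusPending is an illustrative stand-in for any non-final status, and the types and function name are assumptions. As stated above, a new certificate requires at least one new bridge, while an InError resend does not, since it already carries earlier bridges.

```go
package main

import "fmt"

type certStatus string

const (
	statusPending certStatus = "Pending" // stand-in for any non-final status
	statusInError certStatus = "InError"
	statusSettled certStatus = "Settled"
)

type lastCert struct {
	Height uint64
	Status certStatus
}

type decision struct {
	Send   bool
	Height uint64
	Reason string
}

// nextCertificate decides whether to send a certificate and at which height.
// last == nil means no certificate has been sent yet.
func nextCertificate(last *lastCert, newBridges int) decision {
	switch {
	case last != nil && last.Status != statusInError && last.Status != statusSettled:
		return decision{Reason: "previous certificate not final yet; wait"}
	case last != nil && last.Status == statusInError:
		// Resend with the same height: previous bridges/claims plus any new ones.
		return decision{Send: true, Height: last.Height, Reason: "resend InError certificate"}
	case newBridges == 0:
		return decision{Reason: "no new bridges; LER unchanged"}
	case last == nil:
		return decision{Send: true, Height: 0, Reason: "first certificate"}
	default:
		return decision{Send: true, Height: last.Height + 1, Reason: "new certificate"}
	}
}

func main() {
	fmt.Printf("%+v\n", nextCertificate(&lastCert{Height: 4, Status: statusInError}, 0))
	fmt.Printf("%+v\n", nextCertificate(&lastCert{Height: 4, Status: statusSettled}, 3))
}
```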

The image below depicts the interaction between different components when building and sending a certificate to the Agglayer in the PessimisticProof mode.

sequenceDiagram
    participant User
    participant L1RPC as L1 Network
    participant L2RPC as L2 Network
    participant Bridge as Bridge Smart Contract
    participant AggLayer
    participant L2BridgeSyncer
    participant L1InfoTreeSync
    participant AggSender
    User->>L1RPC: bridge (L1->L2)
    L1RPC->>Bridge: bridgeAsset
    Bridge->>AggLayer: updateL1InfoTree
    Bridge->>Bridge: auto claim
    User->>L2RPC: bridge (L2->L1)
    L2RPC->>L2BridgeSyncer: bridgeAsset emits bridgeEvent
    User->>L2RPC: claimAsset emits claimEvent
    L2RPC->>L1InfoTreeSync: index claimEvent
    AggSender->>AggSender: wait for epoch to elapse
    AggSender->>L1InfoTreeSync: check latest sent certificate
    AggSender->>L2BridgeSyncer: get published bridges
    AggSender->>L2BridgeSyncer: get imported bridge exits
    Note right of AggSender: generate a Merkle proof for each imported bridge exit
    AggSender->>L1InfoTreeSync: get l1 info tree merkle proof for imported bridge exits
    AggSender->>AggLayer: send certificate

AggchainProof Mode

In essence, the AggchainProof mode follows the same logic and flow as the PessimisticProof mode. The only differences are:

- The aggchain prover is called to generate an aggchain proof, which is sent to the Agglayer in the certificate.

- Resending an InError certificate does not expand it with new bridges and events that the syncer might have received in the meantime. The aggchain prover has already generated a proof for the given block range, and proof generation can be a long process, so reusing that range is a small optimization. Note that this might change in the future.
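The difference in resend behavior can be illustrated by the block range each mode would use. This is a hypothetical sketch. blockRange and resendRange are illustrative names. The PessimisticProof branch assumes the range is extended up to the latest block the syncer has, and it ignores any MaxCertSize limit.

```go
package main

import "fmt"

type blockRange struct{ From, To uint64 }

type aggsenderMode string

const (
	pessimisticProof aggsenderMode = "PessimisticProof"
	aggchainProof    aggsenderMode = "AggchainProof"
)

// resendRange returns the L2 block range used when resending an InError certificate.
func resendRange(mode aggsenderMode, inError blockRange, syncerLatest uint64) blockRange {
	if mode == aggchainProof || syncerLatest <= inError.To {
		// AggchainProof reuses the range its proof was generated for;
		// PessimisticProof has nothing newer to add in this case.
		return inError
	}
	// PessimisticProof: keep the original start and pick up newer bridges and claims.
	return blockRange{From: inError.From, To: syncerLatest}
}

func main() {
	prev := blockRange{From: 100, To: 150}
	fmt.Println(resendRange(pessimisticProof, prev, 180)) // {100 180}
	fmt.Println(resendRange(aggchainProof, prev, 180))    // {100 150}
}
```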

The aggchain prover is called right before the certificate is signed and sent to the Agglayer. To generate an aggchain proof, the prover needs the following:

- Block range on L2 for which we are trying to generate a certificate.

- Finalized L1 info tree root, leaf, and proof on the L1 info tree. This is the latest finalized L1 info tree root, which the prover needs to generate the proof. This root is also used to generate a Merkle proof for every imported bridge exit (claim) in the certificate.

- Injected GlobalExitRoots on L2 and their leaves and proofs. Merkle proofs of the injected GERs are calculated based on the finalized L1 info tree root.

- Imported bridge exits (claims) we intend to include in the certificate for the given block range.
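Several of these inputs are Merkle proofs against the L1 info tree root. The usual way to check such a proof is to fold the leaf with its siblings level by level. At each level, the corresponding bit of the leaf index decides whether the running node is a left or a right child. The sketch below shows that technique. The L1 info tree hashes with keccak256 and has a fixed depth. This sketch instead uses SHA-256 from the standard library as a stand-in and a depth-2 tree, so its roots will not match on-chain values. The function names are hypothetical.

```go
package main

import (
	"crypto/sha256"
	"fmt"
)

type node = [32]byte

// hashPair hashes left || right (SHA-256 stand-in for keccak256).
func hashPair(left, right node) node {
	return sha256.Sum256(append(left[:], right[:]...))
}

// rootFromProof folds leaf with its siblings; bit i of index says whether the
// running node is a right child (1) or a left child (0) at level i.
func rootFromProof(leaf node, index uint32, siblings []node) node {
	cur := leaf
	for i, sib := range siblings {
		if (index>>uint(i))&1 == 1 {
			cur = hashPair(sib, cur)
		} else {
			cur = hashPair(cur, sib)
		}
	}
	return cur
}

// verifyProof reports whether leaf at index is included under root.
func verifyProof(leaf node, index uint32, siblings []node, root node) bool {
	return rootFromProof(leaf, index, siblings) == root
}

func main() {
	// Build a depth-2 tree with four leaves.
	leaves := []node{{1}, {2}, {3}, {4}}
	h01 := hashPair(leaves[0], leaves[1])
	h23 := hashPair(leaves[2], leaves[3])
	root := hashPair(h01, h23)

	// Proof for leaf index 2: sibling leaf 3, then the left subtree h01.
	proof := []node{leaves[3], h01}
	fmt.Println("leaf 2 valid:", verifyProof(leaves[2], 2, proof, root))
	fmt.Println("wrong index valid:", verifyProof(leaves[2], 3, proof, root))
}
```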

The image below depicts the interaction between different components when building and sending a certificate to the Agglayer in the AggchainProof mode.

sequenceDiagram
    participant User
    participant L1RPC as L1 Network
    participant L2RPC as L2 Network
    participant Bridge as Bridge Smart Contract
    participant AggLayer
    participant L2BridgeSyncer
    participant L1InfoTreeSync
    participant AggSender
    participant AggchainProver
    User->>L1RPC: bridge (L1->L2)
    L1RPC->>Bridge: bridgeAsset
    Bridge->>AggLayer: updateL1InfoTree
    Bridge->>Bridge: auto claim
    User->>L2RPC: bridge (L2->L1)
    L2RPC->>L2BridgeSyncer: bridgeAsset emits bridgeEvent
    User->>L2RPC: claimAsset
    L2RPC->>L1InfoTreeSync: claimEvent
    AggSender->>AggSender: wait for epoch to elapse
    AggSender->>L1InfoTreeSync: check latest sent certificate
    AggSender->>L2BridgeSyncer: get published bridges
    AggSender->>L2BridgeSyncer: get imported bridge exits
    AggSender->>L1InfoTreeSync: get finalized l1 info tree root
    AggSender->>L2RPC: get injected GERs
    Note right of AggSender: generate a Merkle proof for each injected GER
    AggSender->>L1InfoTreeSync: get l1 info tree merkle proof for injected GERs
    AggSender->>AggchainProver: generate aggchain proof
    Note right of AggSender: generate a Merkle proof for each imported bridge exit
    AggSender->>L1InfoTreeSync: get l1 info tree merkle proof for imported bridge exits
    AggSender->>AggLayer: send certificate

Certificate Data

The certificate is the data submitted to the Agglayer. It must be signed to be accepted by the Agglayer, which responds with a certificateID (hash).

| Field Name | Description |
|---|---|
| network_id | The ID of the rollup (>0) |
| height | Order of certificates. The first one is 0 |
| prev_local_exit_root | For the first certificate, it must be the one in the smart contract (currently 0x000…00) |
| new_local_exit_root | The root after applying bridge_exits |
| bridge_exits | The leaves of the LER tree included in this certificate (bridgeAsset calls) |
| imported_bridge_exits | The claims done in this network |
| aggchain_params | Aggchain params returned by the aggchain prover |
| aggchain_proof | Aggchain proof generated by the aggchain prover |
| custom_chain_data | Custom chain data returned by the aggchain prover |

Configuration

| Name | Type | Description |
|---|---|---|
| StoragePath | string | Full file path (with file name) where to store Aggsender DB |
| AgglayerClient | *aggkitgrpc.ClientConfig | Agglayer gRPC client configuration. |
| AggsenderPrivateKey | SignerConfig | Configuration of the signer used to sign the certificate on the Aggsender before sending it to the Agglayer. It can be a local private key, or an external one. |
| URLRPCL2 | string | L2 RPC |
| BlockFinality | string | Indicates which finality the AggLayer follows (FinalizedBlock, SafeBlock, LatestBlock, PendingBlock). You can add an offset, e.g. “FinalizedBlock/20” or “FinalizedBlock/-20” |
| TriggerCertMode | string | Mode used to trigger certificate sending. Options: “EpochBased”, “NewBridge”, “ASAP”, “Auto” (default: “Auto”) |
| TriggerEpochBased | TriggerEpochBasedConfig | Configuration for EpochBased trigger mode (used when TriggerCertMode is “EpochBased”) |
| TriggerASAP | TriggerASAPConfig | Configuration for ASAP trigger mode (used when TriggerCertMode is “ASAP”) |
| MaxRetriesStoreCertificate | int | Number of retries if Aggsender fails to store certificates on DB. 0 = infinite retries |
| DelayBetweenRetries | Duration | Delay between retries for storing certificate and initial status check |
| MaxCertSize | uint | The maximum size of the certificate in bytes. 0 means no limit |
| DryRun | bool | If true, AggSender will not send certificates to Agglayer (for debugging) |
| EnableRPC | bool | Enable the Aggsender’s RPC layer |
| AggkitProverClient | *aggkitgrpc.ClientConfig | Configuration for the AggkitProver gRPC client |
| Mode | string | Defines the mode of the AggSender (PessimisticProof or AggchainProof) |
| CheckStatusCertificateInterval | Duration | Interval at which the AggSender will check the certificate status in Agglayer |
| RetryCertAfterInError | bool | If true, Aggsender will re-send InError certificates immediately after status change |
| MaxSubmitCertificateRate | RateLimitConfig | Maximum allowed rate of submission of certificates in a given time. |
| GlobalExitRootL2Addr | Address | Address of the GlobalExitRootManager contract on L2 sovereign chain (needed for AggchainProof mode) |
| SovereignRollupAddr | Address | Address of the sovereign rollup contract on L1 |
| RequireStorageContentCompatibility | bool | If true, data stored in the database must be compatible with the running environment |
| RequireNoFEPBlockGap | bool | If true, AggSender should not accept a gap between lastBlock from lastCertificate and first block of FEP |
| OptimisticModeConfig | optimistic.Config | Configuration for optimistic mode (required by FEP mode). |
| RequireOneBridgeInPPCertificate | bool | If true, AggSender requires at least one bridge exit for Pessimistic Proof certificates |
| MaxL2BlockNumber | uint64 | Set the last block to be included in a certificate (0 = disabled) |
| StopOnFinishedSendingAllCertificates | bool | Stop when there are no more certificates to send due to MaxL2BlockNumber |
| StorageRetainCertificatesPolicy | StorageRetainCertificatesPolicy | Configure the certificate retain policy |
| UnsetClaimsMaxLogBlockRange | uint64 | Proactive max block range for eth_getLogs queries when fetching unset claims. 0 means disabled (fallback to reactive chunking on error) |

StorageRetainCertificatesPolicy

The StorageRetainCertificatesPolicy structure configures the certificate retain policy

| Field Name | Type | Description |
|---|---|---|
| RetainCertificatesCount | uint32 | If it is 0, all certificates are stored. If it is greater than 0, it is the number of certificates stored in the DB. The last certificate sent is always saved because it is necessary for proper operation. |
| KeepCertificatesHistory | bool | If true, discarded certificates are moved to the certificate_info_history table instead of being deleted |

TriggerEpochBasedConfig

The TriggerEpochBasedConfig structure configures the epoch-based trigger mode for certificate sending. This configuration is used when TriggerCertMode is set to “EpochBased” (or when “Auto” mode resolves to epoch-based triggering).

| Field Name | Type | Description |
|---|---|---|
| EpochNotificationPercentage | uint | Indicates the percentage of the epoch at which the AggSender should send the certificate. 0 = begin, 50 = middle, 100 = end |

Example:

[AggSender]
TriggerCertMode = "EpochBased"

[AggSender.TriggerEpochBased]
EpochNotificationPercentage = 50

The epoch-based trigger waits for a specific percentage of the epoch to elapse before sending certificates to the Agglayer. This allows for coordinated certificate submission aligned with L1 epoch boundaries.
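The notification point is a linear position within the epoch. The sketch below is minimal and assumes the epoch is measured in L1 blocks with a known start block and length. epochStart and epochLength are hypothetical inputs that would come from the epoch configuration read from the Agglayer. It also assumes integer rounding down.

```go
package main

import "fmt"

// notificationBlock returns the L1 block at which pct percent of the epoch has elapsed.
// pct is clamped to 100; integer division rounds down.
func notificationBlock(epochStart, epochLength uint64, pct uint) uint64 {
	if pct > 100 {
		pct = 100
	}
	return epochStart + epochLength*uint64(pct)/100
}

func main() {
	// Epoch covering L1 blocks 1000..1099 (length 100).
	for _, pct := range []uint{0, 50, 100} {
		fmt.Printf("%3d%% -> block %d\n", pct, notificationBlock(1000, 100, pct))
	}
}
```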

TriggerASAPConfig

The TriggerASAPConfig structure configures the ASAP (As Soon As Possible) trigger mode for certificate sending. This configuration is used when TriggerCertMode is set to “ASAP”.

| Field Name | Type | Description |
|---|---|---|
| DelayBetweenCertificates | Duration | The delay to wait before sending a new certificate after the previous one is settled |
| MinimumNewCertificateInterval | Duration | The minimum interval between two new certificate triggers (0 = no minimum interval) |

Example:

[AggSender]
TriggerCertMode = "ASAP"

[AggSender.TriggerASAP]
DelayBetweenCertificates = "1s"
MinimumNewCertificateInterval = "1h"

The ASAP trigger sends certificates as soon as possible after the last certificate reaches a final state (settled or in error). The DelayBetweenCertificates parameter adds a configurable delay before sending, while MinimumNewCertificateInterval ensures a minimum time gap between certificate submissions to prevent excessive certificate generation.

Trigger Modes

The TriggerCertMode field supports the following modes:

- EpochBased: Triggers certificate sending based on epoch progression. Uses the TriggerEpochBased configuration to determine when in the epoch to send certificates.

- NewBridge: Triggers certificate sending immediately when new bridge events are detected on L2.

- ASAP: Triggers certificate sending as soon as possible after the last certificate reaches a final state (settled or in error).

- Auto: Automatically selects the appropriate trigger mode based on the AggSender mode:

  - PreconfPP mode → NewBridge trigger

  - PessimisticProof and AggchainProof modes → EpochBased trigger
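The Auto mapping above can be written as a lookup. This is a minimal sketch with a hypothetical function name. Only the mode strings come from the list above. Treating an empty TriggerCertMode as "Auto" reflects the documented default. Returning an error for unknown values is an assumed policy.

```go
package main

import "fmt"

// resolveTrigger maps TriggerCertMode (and, for "Auto", the AggSender Mode) to the
// trigger actually used. Unknown values return an error.
func resolveTrigger(triggerCertMode, aggsenderMode string) (string, error) {
	switch triggerCertMode {
	case "EpochBased", "NewBridge", "ASAP":
		return triggerCertMode, nil
	case "", "Auto": // "Auto" is the default
		switch aggsenderMode {
		case "PreconfPP":
			return "NewBridge", nil
		case "PessimisticProof", "AggchainProof":
			return "EpochBased", nil
		}
		return "", fmt.Errorf("cannot resolve Auto trigger for AggSender mode %q", aggsenderMode)
	}
	return "", fmt.Errorf("unknown TriggerCertMode %q", triggerCertMode)
}

func main() {
	for _, mode := range []string{"PreconfPP", "PessimisticProof", "AggchainProof"} {
		trigger, err := resolveTrigger("Auto", mode)
		fmt.Println(mode, "->", trigger, err)
	}
}
```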

OptimisticConfig

The OptimisticConfig structure configures the optimistic mode for the AggSender. This configuration is required when running in FEP (Fast Exit Protocol) mode.

| Field Name | Type | Description |
|---|---|---|
| SovereignRollupAddr | Address | The L1 address of the AggchainFEP contract |
| TrustedSequencerKey | SignerConfig | The private key used to sign optimistic proofs. Must be the trusted sequencer’s key. |
| OpNodeURL | string | The URL of the OpNode service used to fetch aggregation proof public values |
| RequireKeyMatchTrustedSequencer | bool | If true, enables a sanity check that the signer’s public key matches the trusted sequencer address. This ensures the signer is the trusted sequencer and not a random signer. |

Example:

[AggSender]

[AggSender.OptimisticModeConfig]
SovereignRollupAddr = "0x1234..."
TrustedSequencerKey = { Method="local", Path="/opt/private_key.keystore", Password="password" }
OpNodeURL = "http://localhost:8080"
RequireKeyMatchTrustedSequencer = true

The optimistic mode is used in FEP (Fast Exit Protocol) to enable faster exit processing by allowing optimistic proofs to be submitted before full verification. The trusted sequencer is responsible for signing these proofs, and this configuration ensures that only the authorized trusted sequencer can submit proofs.

Use Cases

This section lists the different use cases and their outcomes:

- No bridges from L2 -> L1 means no certificate will be built.

- Having bridges without claims means a certificate will be built and sent.

- Having bridges and claims means a certificate will be built and sent.

- If the previous certificate we sent is InError, we need to resend that certificate with all the previously sent data, plus the new bridges and claims we saw after that.

- If the previously sent certificate is neither InError nor Settled, no new certificate will be sent or resent. The AggSender waits for one of these two statuses on the Agglayer.

Debugging in Local with Bats E2E Tests

Preconditions:

- Make sure you have an up-to-date aggkit:local Docker image built. To build one, run the make build-docker-ci command.

- Run the bridge_spammer in the background (namely, make sure that additional_services includes bridge_spammer).

- Start kurtosis with pessimistic proof (OP stack):

./test/run-local-e2e.sh single-l2-network-op-pessimistic path_to_kurtosis_cdk_repo -

Note that the fourth argument corresponds to the e2e repo path. If you would like to run the set of e2e tests immediately after the kurtosis environment is up and running, you should provide a real path.

- After kurtosis is started, stop the aggkit-001 service (kurtosis service stop aggkit aggkit-001).

- Open the repo in an IDE (like Visual Studio Code), and run ./scripts/local_config_pp from the main repo folder. This generates a ./tmp folder in which the Aggsender storage and other aggkit node data are saved, and prints a launch.json:

{
    "version": "0.2.0",
    "configurations": [
        {
            "name": "Debug aggsender",
            "type": "go",
            "request": "launch",
            "mode": "auto",
            "program": "cmd/",
            "cwd": "${workspaceFolder}",
            "args": [
                "run",
                "-cfg", "tmp/aggkit/local_config/test.kurtosis.toml",
                "-components", "aggsender"
            ]
        }
    ]
}

- Copy this to your launch.json and start debugging.

- This starts aggkit with the aggsender running.

- Wait until the bridge_spammer deposits are indexed by the aggsender. As a result of the bridge activity, there should be a certificate, and you can debug the whole process.

- Optionally, you can also run the E2E tests by running the following command and providing the real e2e repo path:

./test/run-local-e2e.sh single-l2-network-op-pessimistic - path_to_e2e_repo

Prometheus Metrics

If enabled in the configuration, Aggsender exposes the following Prometheus metrics:

| Metric Name | Type | Description |
|---|---|---|
| aggsender_number_of_certificates_sent | Counter | Number of certificates sent |
| aggsender_number_of_certificates_in_error | Counter | Number of certificates in error |
| aggsender_number_of_sending_retries | Counter | Number of sending retries |
| aggsender_number_of_certificates_settled | Counter | Number of certificates settled |
| aggsender_number_of_prover_errors | Counter | Number of prover errors |
| aggsender_multisig_threshold_not_reached | Counter | Number of times multisig threshold was not reached |
| aggsender_validator_errors_total | Counter (labeled by aggsender_validator) | Total number of errors returned by a validator over time |
| aggsender_validator_invalid_signature_total | Counter (labeled by aggsender_validator) | Number of times a validator returned an invalid signature |
| aggsender_validate_time | Histogram | Time taken to validate a certificate (seconds) |
| aggsender_prover_time | Histogram | Time taken by the prover (seconds) |
| aggsender_certificate_settlement_time | Histogram | Time taken to settle a certificate (seconds) |
| aggsender_certificate_build_time | Histogram | Time taken to build a certificate (seconds) |

Configuration Example

To enable Prometheus metrics, configure Aggsender as follows:

[Prometheus]
Enabled = true
Host = "localhost"
Port = 9091

With this configuration, the metrics will be available at: http://localhost:9091/metrics

Additional Documentation

- https://potential-couscous-4gw6qyo.pages.github.io/protocol/workflow_centralized.html

- Initial PR

- https://agglayer.github.io/agglayer/pessimistic_proof/index.html

AggSender Validator Component

The AggsenderValidator is a critical component of the AggKit framework that provides certificate validation services for the AggSender. It ensures that certificates built by the AggSender are correct and valid before they are submitted to the AggLayer.

Conceptual Overview

Purpose

The Aggsender Validator serves as an independent validation layer that verifies the correctness of certificates generated by the AggSender Proposer. It acts as a security gate, ensuring that only properly constructed certificates are submitted to the AggLayer and preventing invalid submissions that could cause issues in the chain. A given validator is part of a committee whose signature acts as a vote for the correctness of a new certificate. When the signature threshold for a new certificate is reached, the certificate can be accepted by the Agglayer.

Key Functions

- Certificate Validation: Validates the structure, content, and integrity of certificates

- Certificate Signing: Signs valid certificates using a configured signer

- Health Monitoring: Provides health check endpoints for monitoring service status

- gRPC Service: Exposes validation functionality through a gRPC interface

Validation Process

The validator performs comprehensive checks on incoming certificates:

- Certificate Continuity: Verifies certificates are contiguous (no gaps in height)

- Previous Certificate Status: Checks that previous certificates are properly settled

- Certificate Reconstruction: Rebuilds the certificate using data indexed from the L1 and L2 RPCs and compares it with the incoming certificate. Validators and proposers should use independent RPCs.

- Content Verification: Validates that all certificate fields match expected values

Architecture Overview

Components

The AggsenderValidator consists of several key components:

┌─────────────────────────────────────────────────────────────┐
│                     AggsenderValidator                      │
├─────────────────────────────────────────────────────────────┤
│  ┌─────────────────┐           ┌─────────────────┐          │
│  │  gRPC Service   │           │ Validation Logic│          │
│  │                 │           │                 │          │
│  │ - HealthCheck   │           │ - CertValidator │          │
│  │ - ValidateCert  │           │ - FlowInterface │          │
│  └─────────────────┘           └─────────────────┘          │
│                                                             │
│  ┌─────────────────┐           ┌─────────────────┐          │
│  │  Data Access    │           │    Signing      │          │
│  │                 │           │                 │          │
│  │ - L1InfoTree    │           │ - Signer        │          │
│  │ - CertQuerier   │           │ - KeyManagement │          │
│  │ - LERQuerier    │           │                 │          │
│  └─────────────────┘           └─────────────────┘          │
└─────────────────────────────────────────────────────────────┘

Aggsender Validator as a part of a committee

The Aggsender Validator operates as part of a distributed validator committee that provides security through consensus. This multi-signature approach ensures that certificates are validated by multiple independent parties before being accepted by the AggLayer.

sequenceDiagram

participant AP as AggSender Proposer

participant AV1 as Validator 1

participant AV2 as Validator 2

participant AV3 as Validator 3

participant AVN as Validator N

participant AL as AggLayer

Note over AP: Certificate ready for validation

par Parallel Validation Requests

AP->>AV1: ValidateCertificate(cert, prevCertID)

AP->>AV2: ValidateCertificate(cert, prevCertID)

AP->>AV3: ValidateCertificate(cert, prevCertID)

AP->>AVN: ValidateCertificate(cert, prevCertID)

end

par Independent Validation & Signing

AV1->>AV1: Validate & Sign

AV2->>AV2: Validate & Sign

AV3->>AV3: Validate & Sign

AVN->>AVN: Validate & Sign

end

par Signature Responses

AV1-->>AP: Signature 1 ✓

AV2-->>AP: Signature 2 ✓

AV3-->>AP: Validation Error ✗

AVN-->>AP: Signature N ✓

end

Note over AP: Check if threshold met<br/>(e.g., 3 out of 4 signatures)

alt Threshold Met

AP->>AL: SubmitCertificate(cert + signatures)

AL-->>AP: Certificate ID

else Threshold Not Met

AP->>AP: Reject certificate<br/>Log validation failures

end

Committee Consensus Process

- Certificate Proposal: The AggSender Proposer builds a certificate and submits it to all committee members

- Parallel Validation: Each validator independently validates the certificate using the same validation logic

- Independent Signing: Valid certificates are signed by each validator using their unique private keys

- Signature Collection: The proposer collects signatures from all validators

- Threshold Check: The proposer verifies that enough validators have signed (e.g., 3 out of 4, or 67% majority)

- Certificate Submission: If threshold is met, the certificate with collected signatures is submitted to AggLayer

- Rejection Handling: If threshold is not met, the certificate is rejected and the process logs validation failures

This committee-based approach provides several security benefits:

- Decentralization: No single point of failure in validation

- Consensus: Multiple independent validators must agree on certificate validity

- Fault Tolerance: System continues to operate even if some validators are unavailable

- Security: Malicious or compromised validators cannot unilaterally approve invalid certificates

Core Interfaces

CertificateValidator

The main validation engine that implements the core validation logic:

- ValidateCertificate(ctx, params): Main validation method. It checks certificate continuity, verifies the certificate content, and verifies the proof for each claim (imported bridge exit).

ValidatorService (gRPC)

Exposes validation functionality via gRPC:

- HealthCheck(): Returns service status and version

- ValidateCertificate(): Validates and signs certificates

Local vs Remote Validation

The system supports two validation modes:

- LocalValidator: Validates certificates locally without signing

- RemoteValidator: Connects to a remote validation service via gRPC

Validation Modes

Local Validator

- Runs validation logic in the same process as AggSender

- Does not sign certificates (validation only)

- Useful for development and testing

- Direct access to storage and other components

Remote Validator

- Connects to a separate AggsenderValidator service

- Provides full validation and signing capabilities

- Production-ready with proper isolation

- Communicates via gRPC protocol

Requirements & Configuration

Prerequisites

- Go Environment: Go 1.24+

- Database Access: SQLite databases for L1InfoTreeSync and BridgeL2Sync

- L1/L2 Connectivity: Access to L1 and L2 RPC endpoints

- Signer Configuration: Private key for certificate signing

- AggLayer Client: gRPC connection to AggLayer

- Expose gRPC service: Needs to be accessible to Aggsender Proposer

Configuration Parameters

The validator is configured using a .toml file. Check the default values in this file.

| Name | Type | Description |

|---|---|---|

| UnsetClaimsMaxLogBlockRange | uint64 | Proactive max block range for eth_getLogs queries when fetching unset claims. 0 means disabled (fallback to reactive chunking on error) |

Running the Validator

As a Standalone Component

# Run only the validator component

./aggkit run --components aggsender-validator --cfg config.toml

As Part of AggSender

The validator can be integrated into the AggSender flow:

# Run AggSender with validator in PessimisticProof mode

./aggkit run --components aggsender --cfg config.toml

Configuration in AggSender:

[AggSender]
Mode = "PessimisticProof"
RequireValidatorCall = true # Use remote validator

[AggSender.ValidatorClient]
URL = "localhost:50051"

Development Setup

For local development and testing:

- Setup Database: Ensure SQLite databases are accessible

- Configure Components: Set up L1InfoTreeSync and BridgeSync

- Start Dependencies: Run required L1/L2 networks

- Launch Validator: Start the validator service

Integration with AggSender

The validator integrates with AggSender in two ways:

- Local Integration: Embedded validation within AggSender process (used only for development purposes, to validate the certificate using the same logic as Aggsender Validator, but in the Aggsender Proposer itself)

- Remote Integration: Separate validator service accessed via gRPC

Remote Integration Flow

sequenceDiagram

participant AP as AggSender Proposer

participant AV as AggSender Validator

participant AL as AggLayer

AP->>AV: ValidateCertificate(cert, prevCertID)

AV->>AV: Reconstruct certificate

AV->>AV: Compare certificates

AV->>AV: Sign certificate

AV->>AP: Return signature

AP->>AL: Submit signed certificate

gRPC API

The validator exposes a gRPC API defined in proto/v1/validator.proto:

Service Definition

service AggsenderValidator {
  rpc HealthCheck(google.protobuf.Empty) returns (HealthCheckResponse);
  rpc ValidateCertificate(ValidateCertificateRequest) returns (ValidateCertificateResponse);
}

Methods

HealthCheck

Returns the status and version of the validator service.

Response:

message HealthCheckResponse {
  string version = 1; // Version of the validator
  string status = 2;  // Status (OK, ERROR, etc.)
  string reason = 3;  // Additional status information
}

ValidateCertificate

Validates a certificate and returns a signature if valid. If not, it returns an error.

Request:

message ValidateCertificateRequest {
  agglayer.node.types.v1.CertificateId previous_certificate_id = 1;
  agglayer.node.types.v1.Certificate certificate = 2;
  uint64 last_l2_block_in_cert = 3;
}

Response:

message ValidateCertificateResponse {
  agglayer.interop.types.v1.FixedBytes65 signature = 1;
}

Validation Logic

Certificate Validation Steps

- Null Check: Ensure proposed certificate is not null

- Certificate Continuity: Check height progression and LocalExitRoot continuity

- Previous Certificate Status: Ensure previous certificate is settled

- Certificate Reconstruction: Rebuild certificate using the same building logic the proposer used

- Content Comparison: Compare reconstructed vs. incoming certificate

- Proof Verification: Verify the proof for each claim (imported bridge exit)

- Signing: Sign the certificate if validation passes

Key Validation Rules

- Height Continuity: Each certificate height must be previous + 1

- LER Consistency: PrevLocalExitRoot must match the previous certificate's NewLocalExitRoot

- First Certificate: Height 0 must have the correct starting LocalExitRoot defined in the rollup contract

- Block Range: Must be contiguous with no gaps

Error Handling

The validator returns specific errors for different validation failures:

- ErrNilCertificate: Certificate is null

- Certificate height mismatch errors

- LocalExitRoot continuity errors

- Certificate comparison differences

Monitoring & Debugging

Health Checks

The validator provides health check endpoints for monitoring:

# Check validator health via gRPC

grpcurl -plaintext localhost:50051 aggkit.aggsender.validator.v1.AggsenderValidator/HealthCheck

Logging

The validator provides detailed logging for debugging:

- Certificate validation steps

- Comparison differences between certificates

- Error details and stack traces

- Performance metrics

Common Issues

- Certificate Height Gaps: Ensure continuous certificate submission

- LocalExitRoot Mismatches: Verify bridge synchronization is correct

- Signing Failures: Verify signer configuration and key access

- gRPC Connection Issues: Check network connectivity and service status

Best Practices

- Use Remote Validation: For production environments, use remote validator service

- Monitor Health: Implement regular health checks and alerting

- Secure Keys: Use proper key management for signing certificates

- Backup Storage: Ensure L2 bridge and L1InfoTree syncers storage is backed up

- Performance Monitoring: Monitor validation times and resource usage

- Error Handling: Implement proper retry logic for transient failures

Examples

Basic Validation Call

// Create validator (named certValidator so it does not shadow the validator package)
certValidator := validator.NewAggsenderValidator(
	logger, flowPP, l1InfoTreeQuerier, certQuerier, lerQuerier)

// Validate certificate
params := types.VerifyIncomingRequest{
	Certificate:         cert,
	PreviousCertificate: prevCert,
	LastL2BlockInCert:   blockNumber,
}

err := certValidator.ValidateCertificate(ctx, params)
if err != nil {
	log.Errorf("Validation failed: %v", err)
	return err
}

Remote Validator Client

// Create remote validator client
remoteValidator, err := validator.NewRemoteValidator(
	grpcConfig, storage)
if err != nil {
	return err
}

// Validate and sign certificate
signature, err := remoteValidator.ValidateAndSignCertificate(
	ctx, certificate, lastL2Block)
if err != nil {
	log.Errorf("Remote validation failed: %v", err)
	return err
}

Bridge service component

The bridge service abstracts interaction with the unified LxLy bridge. It is a decentralized indexer of the bridge data. Each bridge service indexes the L1 network and one dedicated L2 network (uniquely identified by the network ID parameter). Therefore, each agglayer-connected chain runs its own bridge service. It is implemented as a JSON-RPC service.

Bridge flow

Bridge flow L2 -> L2

The diagram below describes the basic L2 -> L2 bridge workflow.

sequenceDiagram

participant User

participant L2 (A)

participant Aggkit (A)

participant AggLayer

participant L2 (B)

participant Aggkit (B)

participant L1

User->>L2 (A): Bridge assets to L2 (B)

L2 (A)->>L2 (A): Index bridge tx & updates the local exit tree

Aggkit (A)->>AggLayer: Build & send certificate (Aggsender)

AggLayer->>L1: Settle batch

L1->>L1: update GER

Note right of L1: rollupmanager updates the GER & RER (PolygonZKEVMGlobalExitRootV2.sol)

AggLayer-->>L2 (A): L1 tx hash

Aggkit (A)->>L1: Aggoracle fetches last finalized GER from L1

Aggkit (A)->>L2 (A): Aggoracle injects the GER on L2 (A) GlobalExitRootManagerL2SovereignChain.sol

Aggkit (B)->>L1: Aggoracle fetches last finalized GER from L1

Aggkit (B)->>L2 (B): Aggoracle injects the GER on L2 (B) GlobalExitRootManagerL2SovereignChain.sol

User->>Aggkit (A): Call bridge_l1InfoTreeIndexForBridge endpoint on the origin network(A)

Aggkit (A)-->>User: Returns L1InfoTree index X for which the bridge was included

loop Poll destination network, until `L1InfoTreeLeaf` is retrieved

User->>Aggkit (B): Poll bridge_injectedInfoAfterIndex on destination network L2(B) until a non-null response.

Aggkit (B)-->>User: Returns the first L1InfoTreeLeaf(GER=Y) for the GER injected on L2(B) at or after L1InfoTree index X

end

User->>Aggkit (A): Call bridge_getProof on origin network(A) to generate merkle proof for bridge using l1InfoTreeIndex of GER Y and networkID(A)

Aggkit (A)-->>User: Return claim proof

User->>L2 (B): Claim (proof)

L2 (B)->>L2 (B): Send claim tx<br/>(bridge is settled on the L2 (B))

L2 (B)-->>User: Tx hash

Bridge flow L1 -> L2

The diagram below describes the basic L1 -> L2 bridge workflow.

sequenceDiagram

participant User

participant L1

participant Aggkit

participant L2

User->>L1: Bridge assets to L2

L1->>L1: Updates the mainnet exit tree

L1->>L1: Update GER

Note right of L1: bridgeContract updates the GER<br/>only if `forceUpdateGlobalExitRoot` is true in the bridge transaction.

Aggkit->>L1: Aggoracle fetches last finalized GER

Aggkit->>L2: Aggoracle injects the GER on L2 GlobalExitRootManagerL2SovereignChain.sol

User->>Aggkit: Call bridge_l1InfoTreeIndexForBridge endpoint on the origin network

Aggkit-->>User: Returns L1InfoTree index X for which the bridge was included

loop Poll destination network, until `L1InfoTreeLeaf` is retrieved

User->>Aggkit: Poll bridge_injectedInfoAfterIndex on destination network (L2) until a non-null response.

Aggkit-->>User: Returns the first L1InfoTreeLeaf(GER=Y) for the GER injected on L2 at or after L1InfoTree index X

end

User->>Aggkit: Call bridge_getProof on origin network to generate merkle proof for bridge using l1InfoTreeIndex of GER Y and networkID=0 (L1)

Aggkit-->>User: Return claim proof

User->>L2: Claim (proof)

L2->>L2: Send claimAsset/claimBridge tx on the destination network<br/>(bridge is settled on the L2)

L2-->>User: Tx hash

Notes:

- In CDK-Erigon, the Global Exit Root (GER) on the L2 smart contract (PolygonZKEVMGlobalExitRootL2.sol) is automatically updated by the sequencer. In a sovereign chain, the GER is injected on L2 (GlobalExitRootManagerL2SovereignChain.sol) by the Aggoracle component.

- A non-null response from bridge_injectedInfoAfterIndex indicates that the bridge is ready to be claimed on the destination network.

- If forceUpdateGlobalExitRoot is set to false in a bridge transaction, the GER will not be updated with that transaction, which saves gas while bridging. The user must then wait until the GER is updated by another bridge transaction before claiming.

Bridge flow L2 -> L1

The diagram below describes the basic L2 -> L1 bridge workflow.

sequenceDiagram

participant User

participant L2

participant Aggkit

participant AggLayer

participant L1

User->>L2: Bridge assets to L1

L2->>L2: Index bridge tx & updates the local exit tree

Aggkit->>AggLayer: Build & send certificate (Aggsender)

AggLayer->>L1: Settle batch

L1->>L1: update GER

Note right of L1: rollupmanager updates the GER & RER (PolygonZKEVMGlobalExitRootV2.sol)

AggLayer-->>L2: Return L1 tx hash

Aggkit->>L1: Fetch last finalized GER (Aggoracle)

Aggkit->>L2: Aggoracle injects GER on L2 (GlobalExitRootManagerL2SovereignChain.sol)

User->>Aggkit: Query bridge_l1InfoTreeIndexForBridge endpoint on the origin network(L2)

Aggkit-->>User: Returns L1InfoTree index X for which the bridge was included

loop Poll destination network, until `L1InfoTreeLeaf` is retrieved

User->>Aggkit: Poll bridge_injectedInfoAfterIndex on destination network (L1) until a non-null response.

Aggkit-->>User: Returns the first L1InfoTreeLeaf(GER=Y) for the GER injected at or after L1InfoTree index X

end

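User->>Aggkit: Call bridge_getProof on origin network (L2) to generate merkle proof for bridge using l1InfoTreeIndex of GER Y

Aggkit-->>User: Return claim proof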

User->>L1: Claim (proof)

L1->>L1: Send claimAsset/claimBridge tx on the destination network<br/>(bridge is settled on the L1)

L1-->>User: Tx hash

Indexers

The bridge service relies on specific data located on different chains (such as bridge, claim, and token mapping events, as well as the L1 info tree). These data are retrieved using indexers. Each indexer consists of three components: driver, downloader and processor.

Driver

The driver is in charge of retrieving the blocks and monitoring for reorgs (using the reorg detector component). The idea is to have a driver implementation per chain type (so far there is the EVM driver; in the future, each non-EVM chain would require a new driver implementation).

Downloader

The downloader is in charge of parsing the blocks and logs retrieved by the driver. The downloader (indirectly, via the driver) passes the parsed data to the processor.

Processor

The processor represents the persistence layer, which writes the retrieved indexer data in a format suitable for serving it via the API. It uses an SQLite database.

The diagram below depicts the interaction between components of each indexer.

sequenceDiagram

participant Driver

participant Downloader

participant Processor

Driver->>Driver: Fetch blocks in a loop

Driver->>Driver: Monitor reorgs & finalization

Driver-->>Downloader: Send finalized blocks & logs

Downloader->>Downloader: Parse blocks & event logs

Downloader-->>Processor: Send parsed data

Processor->>Processor: Persist data in SQLite DB

Syncers

In this paragraph, we will list and briefly describe syncers that are of interest for the bridge service.

L1 Info Tree Sync

It interacts with L1 execution layer (via RPC) in order to:

- Sync the L1 info tree,

- Generate merkle proofs,

- Build the bridge <-> L1InfoTree index relation for bridges originating on L1,

- Sync the rollup exit tree (namely, a tree consisting of all local exit trees, which tracks exits per rollup network), persist it, and generate proofs.

Bridge Sync

It interacts with the L2 or L1 execution layer (via RPC) in order to:

- Sync bridges, claims and token mappings. Needs to be modular as it’s execution client specific.

- Build the local exit tree

- Generate merkle proofs
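Merkle proofs for the exit trees are checked by folding the leaf with its sibling path up to the root. The Go sketch below shows that standard check for a binary tree; computeRoot, verifyProof and hashPair are hypothetical helpers, and sha256 stands in for the keccak256 hash used by the LxLy bridge contracts, so the roots it produces will not match on-chain values.

```go
package main

import (
	"crypto/sha256"
	"fmt"
)

type Hash = [32]byte

// hashPair stands in for the tree's node hash (keccak256 on-chain; sha256
// here only to keep the sketch standard-library-only).
func hashPair(a, b Hash) Hash {
	return sha256.Sum256(append(a[:], b[:]...))
}

// computeRoot folds a leaf with its sibling path. At level i, bit i of the
// leaf index tells whether the current node is a left (0) or right (1) child.
func computeRoot(leaf Hash, index uint32, siblings []Hash) Hash {
	node := leaf
	for i, sib := range siblings {
		if (index>>uint(i))&1 == 1 {
			node = hashPair(sib, node)
		} else {
			node = hashPair(node, sib)
		}
	}
	return node
}

func verifyProof(leaf Hash, index uint32, siblings []Hash, root Hash) bool {
	return computeRoot(leaf, index, siblings) == root
}

func main() {
	leaves := []Hash{{1}, {2}, {3}, {4}}
	l01 := hashPair(leaves[0], leaves[1])
	l23 := hashPair(leaves[2], leaves[3])
	root := hashPair(l01, l23)

	// Proof for leaf index 2: sibling leaf 3, then the left subtree root.
	proof := []Hash{leaves[3], l01}
	fmt.Println(verifyProof(leaves[2], 2, proof, root)) // true
	fmt.Println(verifyProof(leaves[2], 3, proof, root)) // false
}
```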

Bridging custom ERC20 token

When a non-native ERC20 token, not yet mapped on a destination network, is bridged, its representation is deployed on the destination network using the CREATE2 opcode. The mapping process emits the NewWrappedToken event on the destination network.

Mapped token details are available via the bridge_getTokenMappings endpoint.

The following diagram depicts the basic flow of bridging the custom ERC20 token.

sequenceDiagram

participant User

participant OriginERC20 as Origin ERC20 Token

participant OriginBridge as Origin Bridge Contract

participant DestIndexer as Destination Bridge Indexer

participant DestBridge as Destination Bridge Contract

%% Step 1: Approve Transaction

User->>OriginERC20: approve(amount)

Note right of OriginERC20: User authorizes bridge to transfer tokens

%% Step 2: Call Bridge Asset

User->>OriginBridge: bridgeAsset(amount, destinationNetwork)

OriginBridge-->>User: Transaction receipt (bridge asset event emitted)

%% Step 3: Indexing on Destination

DestIndexer-->>OriginBridge: Polls for bridge asset event

OriginBridge-->>DestIndexer: Emits bridge asset event

Note right of DestIndexer: Indexes bridge asset transaction

%% Step 4: Polling for Claim Readiness

loop Poll until ready for claim

User->>DestIndexer: Is bridge ready for claim?

DestIndexer-->>User: Not ready yet / Ready signal

end

%% Step 5: Claim Bridge on Destination

User->>DestBridge: claimBridge(leafValue, proofLocalExitRoot, proofRollupExitRoot)

Note right of DestBridge: `leafValue` consists of bridge data <br/> (e.g. globalIndex, originNetwork, originTokenAddress, <br/>destinationNetwork, destinationAddress etc.)

DestBridge-->>DestBridge: Deploys wrapped token

DestBridge-->>DestBridge: Performs token mapping

DestBridge-->>DestBridge: Mints wrapped token to the destination address

%% Step 6: Final Transaction Hash to User

DestBridge-->>User: Transaction hash (wrapped token deployed and tokens minted to the destination address)

Note right of User: Bridge process completed successfully

Prometheus Metrics

The bridge service exposes several Prometheus metrics to track the number of handled requests and their latencies for different API endpoints. These metrics help monitor service performance, request volume, and latency distribution across various handlers. Each handler is identified by a unique handler ID, which takes one of the following values depending on the data it provides:

get_bridges, get_claims, get_token_mappings, get_legacy_token_migrations, l1_info_tree_index_for_bridge, injected_info_after_index, claim_proof, last_reorg_event, get_sync_status, health_check

| Metric Name | Type | Description |
|---|---|---|
| bridge_total_requests | CounterVec | Total number of requests handled per endpoint (handler_id) and HTTP status code (status_code). |
| bridge_request_latency_seconds | HistogramVec | Latency of requests in seconds, recorded per endpoint (handler_id). Useful for analyzing request duration distributions. |

Usage Notes

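bridge_total_requests is a counter, meaning it only increases over time, while bridge_request_latency_seconds is a histogram that records the distribution of request durations. Together, they help monitor the usage and performance of each API endpoint.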

API Documentation

EthTxManager

EthTxManager is responsible for managing transactions.

EthTxManager Configuration

| Parameter | Type | Description | Example/Default |
|---|---|---|---|
| FrequencyToMonitorTxs | duration | Frequency to monitor pending transactions. | "1s" |
| WaitTxToBeMined | duration | Wait time before retrying mining confirmation. | "2s" |
| GetReceiptMaxTime | duration | Max wait time for getting transaction receipt. | "250ms" |
| GetReceiptWaitInterval | duration | Interval between retries for fetching receipt. | "1s" |
| PrivateKeys | array | List of private key configurations (keystore path + password). | [ { Path = "/app/keystore/claimsponsor.keystore", Password = "testonly" } ] |
| ForcedGas | uint64 | Fixed gas value override (0 = no override). | 0 |
| GasPriceMarginFactor | float64 | Gas price multiplier margin. | 1.0 |
| MaxGasPriceLimit | uint64 | Maximum gas price allowed for sending. | 0 |
| StoragePath | string | Path to EthTxManager’s local database. | "/tmp/aggkit/ethtxmanager-claimsponsor.sqlite" |
| ReadPendingL1Txs | bool | Whether to read pending L1 transactions. | false |
| SafeStatusL1NumberOfBlocks | uint64 | Number of blocks to consider a transaction safe. | 5 |
| FinalizedStatusL1NumberOfBlocks | uint64 | Number of blocks to consider a transaction finalized. | 10 |

Etherman

Etherman handles the communication with the network.

Etherman Configuration

| Parameter | Type | Description | Example/Default |
|---|---|---|---|
| URL | string | JSON-RPC URL for the network. | |
| MultiGasProvider | bool | Use multiple gas providers if true. | false |
| L1ChainID | uint64 | The Chain ID of the network to which transactions will be sent. Note: This can be either the L1 or L2 Chain ID. | |
| HTTPHeaders | array | Custom HTTP headers to add to RPC calls. | [] |

Note: If the L1ChainID field is set to 0, Etherman will automatically determine and populate the correct Chain ID at runtime, provided that a valid JSON-RPC URL is supplied.

Release lifecycle

This document presents the Aggkit Software release lifecycle. The Aggkit team has adopted a process grounded in industry-standard best practices to avoid reinventing the wheel and, more importantly, to prevent confusion among new developers and users. By adhering to these widely recognized practices, we ensure that anyone in the industry can intuitively understand and follow our internal procedures with minimal explanation.

Versioning

The versioning process follows the standard Semantic Versioning to tag new versions.

Summary

- MAJOR version when you make incompatible API changes

- MINOR version when you add functionality in a backward compatible manner

- PATCH version when you make backward compatible bug fixes

At this time, the project is in the development phase, so refer to the Semantic Versioning FAQ for the current versioning criteria:

How should I deal with revisions in the 0.y.z initial development phase?

The simplest thing to do is start your initial development release at 0.1.0 and then increment the minor version for each subsequent release.

How do I know when to release 1.0.0?

If your software is being used in production, it should probably already be 1.0.0. If you have a stable API on which users have come to depend, you should be 1.0.0. If you’re worrying a lot about backward compatibility, you should probably already be 1.0.0.

Pre-Releases

Refer to the Software release lifecycle Wikipedia article for a definition and criteria this project is following regarding pre-releases.

Release process

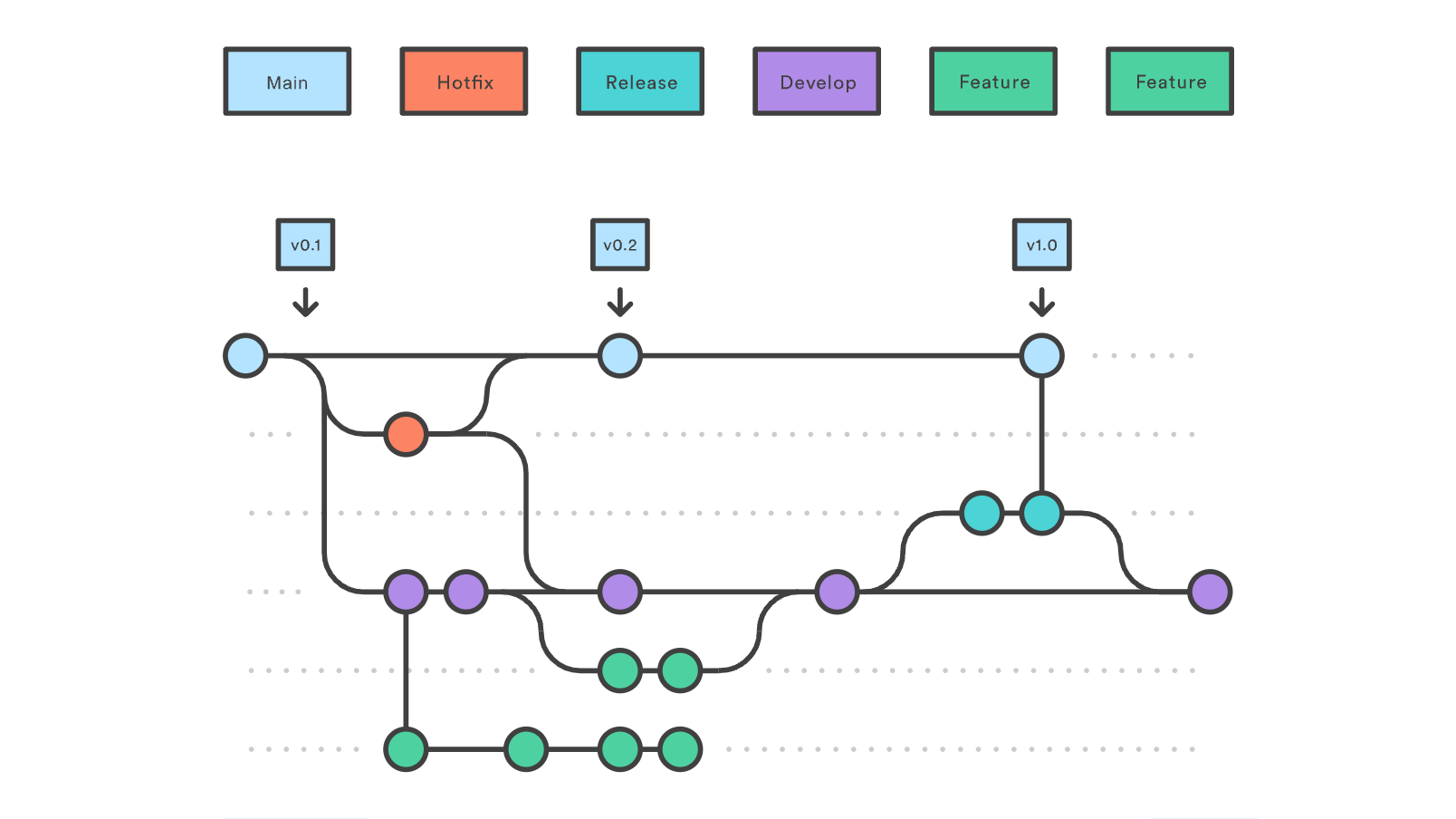

The release process is based on the Gitflow workflow for managing the source code repository.

For a quick reference you can check https://cheatography.com/mikesac/cheat-sheets/gitflow/

As a quick reference this is the diagram of the branching cycle:

FAQ

Should I cherry pick commits made to a release branch while it’s still unmerged?

As stated by the Gitflow workflow, release branches should be short-lived and merged back into the main and develop branches, but from time to time the develop branch may need a commit from a release branch before it is released.

In that case, a cherry-picked commit containing the desired changes can be merged into develop, as those changes would have ended up in develop at some point in the future anyway.

How do we manage several developments in parallel?

Sometimes there’s a necessity to release a new stable version of the previous branch with certain features while simultaneously working on the next version. In that case, we’ll maintain two release branches like release/4.0.0 and release/5.0.0. These branches will evolve in parallel, but most of the changes from the lower release will need to be cherry-picked onto the newest release. Additionally, if any critical fix is made to the newest release, it should be back-ported to the older release.

How to create a hotfix for an older release?

When a release branch is merged into main and develop, it is removed, and only the tag is left. To create a hotfix release, a new release branch will be created from the tag so the necessary fixes can be applied. Then follow the normal release cycle: create a new beta for the release, test it in all environments, then create the final tag and release it.

The fixes may need to be cherry-picked into any open release branches.

Why should we not squash merge when merging a release branch into main or develop?

This is opinionated, but in general there are quite a lot of downsides to squash merging release branches; see this response for some of them: https://stackoverflow.com/questions/41139783/gitflow-should-i-squash-commits-when-merging-from-a-release-branch-into-master/41298098#41298098

Another big downside is that the main and develop branches drift further apart in terms of commits as time passes, until they become completely different.

Reference

Comparison of popular branching strategies https://docs.aws.amazon.com/prescriptive-guidance/latest/choosing-git-branch-approach/git-branching-strategies.html

End-to-end tests

This document enumerates and summarizes the e2e tests. The tests are implemented using the Bats framework and assume there is a running cluster to run them against. They are placed in the test/bats folder and divided into two major categories:

- the ones that involve a single L2 (pessimistic proof) network and an L1 network. They are found in the test/bats/pp folder.

- the ones that involve two L2 (pessimistic proof) networks and a single L1 network. They are found in the test/bats/pp-multi folder.

Reusable helper functions are placed in the test/bats/helpers folder. They cover sending and claiming bridge transactions, fetching proofs, sending transactions, querying contracts, etc. Most of the functions rely on the cast command from Foundry.

Single L2 network

It involves a single L2 network (and a single L1 network), both attached to the same agglayer.

Transfer message

Bridges a message from L1 to L2 by invoking the bridgeMessage function on the bridge contract, then claims it once the global exit root is injected into the destination L2 network.

Native gas token deposit to WETH

Bridges the native token from L1 to L2, where it is mapped to the WETH token, and claims it on L2.

Test Bridge APIs workflow

Bridges the native token from L1 to L2 and then invokes the aggkit bridge service endpoints to verify they are working as expected: bridge_getBridges, bridge_l1InfoTreeIndexForBridge, bridge_injectedInfoAfterIndex and bridge_claimProof.
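To illustrate what a call to one of these endpoints looks like on the wire, here is a minimal Go sketch that builds a JSON-RPC 2.0 request body. It assumes the bridge service is exposed over JSON-RPC (the bridge_* method naming suggests so). Only the method names come from the test description; the parameter value used below is a hypothetical placeholder, because the real parameter lists of bridge_getBridges and the other endpoints are not documented here.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// rpcRequest is a generic JSON-RPC 2.0 request envelope.
type rpcRequest struct {
	JSONRPC string `json:"jsonrpc"`
	ID      int    `json:"id"`
	Method  string `json:"method"`
	Params  []any  `json:"params"`
}

// newBridgeRequest encodes a request for a bridge_* method.
// The params are supplied by the caller; this sketch does not know
// the real parameter list of any bridge service endpoint.
func newBridgeRequest(id int, method string, params ...any) ([]byte, error) {
	if params == nil {
		params = []any{} // encode as [] rather than null
	}
	return json.Marshal(rpcRequest{JSONRPC: "2.0", ID: id, Method: method, Params: params})
}

func main() {
	// Hypothetical parameter: a single network ID placeholder.
	body, err := newBridgeRequest(1, "bridge_getBridges", 0)
	if err != nil {
		panic(err)
	}
	fmt.Println(string(body)) // {"jsonrpc":"2.0","id":1,"method":"bridge_getBridges","params":[0]}
}
```

The resulting body would be sent as an HTTP POST with Content-Type: application/json to the bridge service endpoint of the running environment.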

Custom gas token deposit L1 -> L2

Bridges a custom gas token that pre-exists on L1 and is mapped to the native token on L2, claims it on L2, and asserts that the native token balance has increased once settled on L2.

Custom gas token withdrawal L2 -> L1

Bridges the L2 native token, which is mapped to a custom gas token pre-deployed on the L1 network, from L2 to L1, claims it on L1, and asserts that the receiver address's gas token balance has increased after the claim.

ERC20 token deposit L1 -> L2

It deploys an ERC20 token on L1, then bridges it to L2 and claims it there. When the bridge is claimed, a token representation of the given ERC20 token is automatically deployed on L2.

Two L2 networks

It involves two L2 networks (and a single L1 network), all attached to the same agglayer.

Test L2 to L2 bridge

It bridges native tokens from L1 to both L2 networks and claims them. Afterwards, it bridges from the PP2 L2 network to the PP1 L2 network and claims the transfer on the destination network.

Common configuration

SignerConfig

The SignerConfig struct is the primary configuration object used to initialize a signer. It’s defined in the go_signer library and specifies how and where cryptographic signing operations are performed.

The configuration supports multiple signer types. To use it, set the desired signer type in the Method field. The remaining configuration parameters will vary depending on the selected method.

The main methods are:

Keystore (local)

Use this method to sign with a local keystore file.

| Name | Type | Example | Description |
|---|---|---|---|
| Method | string | local | Must be local |
| Path | string | /opt/private_key.keystore | Full path to the keystore file |
| Password | string | xdP6G8gV9PYs | Password that unlocks the keystore |

Example:

[AggSender]
AggsenderPrivateKey = { Method="local", Path="/opt/private_key.keystore", Password="xdP6G8gV9PYs" }

Google Cloud KMS (GCP)

Use this method to sign using the Google Cloud KMS infrastructure.

| Name | Type | Example | Description |
|---|---|---|---|
| Method | string | GCP | Must be GCP |
| KeyName | string | projects/your-prj-name/locations/your_location/keyRings/name_of_your_keyring/cryptoKeys/key-name/cryptoKeyVersions/version | ID of the key in Google Cloud |

Example:

[AggSender]
AggsenderPrivateKey = { Method="GCP", KeyName="projects/your-prj-name/locations/your_location/keyRings/name_of_your_keyring/cryptoKeys/key-name/cryptoKeyVersions/version" }

Amazon Web Services KMS (AWS)

Use this method to sign using the AWS KMS infrastructure. The key spec must be ECC_SECG_P256K1 (the secp256k1 curve used for Ethereum signatures) to ensure compatibility.

| Name | Type | Example | Description |
|---|---|---|---|
| Method | string | AWS | Must be AWS |
| KeyName | string | a47c263b-6575-4835-8721-af0bbb97XXXX | ID of the key in AWS |

Example:

[AggSender]
AggsenderPrivateKey = { Method="AWS", KeyName="a47c263b-6575-4835-8721-af0bbb97XXXX" }

Others

Additional signing methods are available. For a complete list and detailed configuration options, please refer to the go_signer library documentation (v0.0.7)
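The methods above differ only in which fields they read. As a rough illustration, the Go sketch below validates a configuration per method. SignerConfig here is a hypothetical, simplified stand-in written for this example; it is not the type exported by go_signer, and it only recognizes the three methods described above.

```go
package main

import (
	"errors"
	"fmt"
)

// SignerConfig is a hypothetical, simplified mirror of the documented fields.
// It is NOT the go_signer type, which supports more methods and options.
type SignerConfig struct {
	Method   string // "local", "GCP" or "AWS" in this sketch
	Path     string // local: full path to the keystore file
	Password string // local: password that unlocks the keystore
	KeyName  string // GCP/AWS: identifier of the key in the KMS
}

// validate checks that the fields needed by the selected Method are set.
func (c SignerConfig) validate() error {
	switch c.Method {
	case "local":
		if c.Path == "" {
			return errors.New("local signer: Path is required")
		}
		return nil
	case "GCP", "AWS":
		if c.KeyName == "" {
			return fmt.Errorf("%s signer: KeyName is required", c.Method)
		}
		return nil
	default:
		// The real library accepts further methods; this sketch does not.
		return fmt.Errorf("unsupported signer method %q", c.Method)
	}
}

func main() {
	cfg := SignerConfig{Method: "AWS", KeyName: "a47c263b-6575-4835-8721-af0bbb97XXXX"}
	fmt.Println(cfg.validate()) // <nil>
}
```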

ClientConfig

The ClientConfig structure configures the gRPC client connection. It includes the following fields:

| Field Name | Type | Description |
|---|---|---|
| URL | string | The URL of the gRPC server |
| MinConnectTimeout | types.Duration | Minimum time to wait for a connection to be established |
| RequestTimeout | types.Duration | Timeout for individual requests |
| UseTLS | bool | Whether to use TLS for the gRPC connection |
| Retry | *RetryConfig | Retry configuration for failed requests |

RetryConfig

The RetryConfig structure configures the retry behavior for failed gRPC requests:

| Field Name | Type | Description |
|---|---|---|
| InitialBackoff | types.Duration | Initial delay before retrying a request |
| MaxBackoff | types.Duration | Maximum backoff duration for retries |
| BackoffMultiplier | float64 | Multiplier for the backoff duration |
| MaxAttempts | int | Maximum number of retries for a request |
| Excluded | []Method | List of methods excluded from retry policies |

Example:

[AggSender]

[AggSender.AgglayerClient]
URL = "http://localhost:9000"
MinConnectTimeout = "5s"
RequestTimeout = "300s"
UseTLS = false

[AggSender.AgglayerClient.Retry]
InitialBackoff = "1s"
MaxBackoff = "10s"
BackoffMultiplier = 2.0
MaxAttempts = 16

Method

The Method type represents a gRPC method configuration with the following fields:

| Field Name | Type | Description |
|---|---|---|
| ServiceName | string | The gRPC service name (including package) |
| MethodName | string | The specific gRPC function name (optional) |

This type is used to specify methods that should be excluded from retry policies. The ServiceName field is required and should include both the package and service name.

Example:

[AggSender]

[AggSender.AgglayerClient]

[AggSender.AgglayerClient.Retry]
Excluded = [
  { Service = "agglayer.Agglayer", Method = "SubmitCertificate" },
  { Service = "agglayer.Agglayer", Method = "GetStatus" }
]
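To make the matching rule concrete: gRPC identifies each call by its full method name, /package.Service/Method. The Go sketch below checks such a name against an Excluded list, treating an empty MethodName as "every method of the service" since the table marks MethodName as optional. This is an illustration under that assumption, not aggkit's code, and SomeOtherMethod in the usage is a hypothetical name.

```go
package main

import (
	"fmt"
	"strings"
)

// Method mirrors the documented type: ServiceName is required,
// MethodName is optional (empty means every method of the service).
type Method struct {
	ServiceName string
	MethodName  string
}

// isExcluded reports whether a gRPC full method name such as
// "/agglayer.Agglayer/SubmitCertificate" matches an entry in excluded.
func isExcluded(fullMethod string, excluded []Method) bool {
	service, method, ok := strings.Cut(strings.TrimPrefix(fullMethod, "/"), "/")
	if !ok {
		return false // not in /Service/Method form
	}
	for _, m := range excluded {
		if m.ServiceName == service && (m.MethodName == "" || m.MethodName == method) {
			return true
		}
	}
	return false
}

func main() {
	excluded := []Method{
		{ServiceName: "agglayer.Agglayer", MethodName: "SubmitCertificate"},
		{ServiceName: "agglayer.Agglayer", MethodName: "GetStatus"},
	}
	for _, fm := range []string{"/agglayer.Agglayer/SubmitCertificate", "/agglayer.Agglayer/SomeOtherMethod"} {
		fmt.Printf("%s excluded=%v\n", fm, isExcluded(fm, excluded))
	}
}
```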

RateLimitConfig

The RateLimitConfig structure configures rate limiting behavior. If either NumRequests or Interval is set to 0, rate limiting is disabled.

| Field Name | Type | Description |
|---|---|---|
| NumRequests | int | Maximum number of requests allowed within the interval |
| Interval | types.Duration | Time window for rate limiting |

Example:

[AggSender]

[AggSender.MaxSubmitCertificateRate]
NumRequests = 20
Interval = "1h"

When rate limiting is enabled, if the number of requests exceeds NumRequests within the specified Interval, the system will wait until the next interval before allowing more requests. This helps prevent overwhelming the system with too many requests in a short period.

RPCClientConfig

RPCClientConfig configures the JSON-RPC client used to connect to Ethereum nodes. It is used in multiple places, notably [L1NetworkConfig.RPC] (L1 node) and [Common.L2RPC] (L2 node).

| Field | Type | Default | Description |
|---|---|---|---|
| URL | string | none | JSON-RPC endpoint URL |
| Mode | string | "" | Client mode: "" or "basic" for standard nodes, "op" for Optimism nodes |
| HashFromJSON | bool | false | When true, fetches block hashes via JSON-RPC (eth_getBlockByNumber). When false, computes them locally from the RLP-encoded header (go-ethereum default). Enable this for nodes where RLP hashing does not match the canonical block hash |
| BatchBlockHeaderRetrieval | bool | true | When true, uses JSON-RPC batch requests to fetch block headers in bulk (faster). Disable if the node does not support batch calls |
| RetryMode | string | "backoff" | Retry strategy: "backoff" for exponential backoff, "delays" for a fixed delay list, "" for no retries |
| MaxRetries | int | 5 | Maximum number of retry attempts |
| InitialBackoff | duration | 5s | Initial wait time before the first retry (backoff mode) |
| MaxBackoff | duration | 60s | Maximum wait time between retries (backoff mode) |
| BackoffMultiplier | float64 | 2.0 | Multiplier applied to the backoff duration on each retry |
| Delays | []duration | [] | Explicit list of wait times for each retry attempt (delays mode) |

Example:

[L1NetworkConfig.RPC]
URL = "http://localhost:8545"
Mode = "basic"
HashFromJSON = false
BatchBlockHeaderRetrieval = true
RetryMode = "backoff"
MaxRetries = 5
InitialBackoff = "5s"
MaxBackoff = "60s"
BackoffMultiplier = 2.0

[Common.L2RPC]
URL = "http://localhost:8123"
Mode = "basic"
HashFromJSON = true
BatchBlockHeaderRetrieval = true
RetryMode = "delays"
MaxRetries = 6
Delays = ["1s", "2s", "5s", "10s", "30s", "60s"]